I stopped “editing videos” and started assembling them (AI workflow for indie marketers)

If you’re building in public, you already know the pain: you should be shipping video (launch clips, feature demos, onboarding snippets, ads)… but making video is slow. Recording takes time. Editing takes longer. And once you’ve done it, you’re back at zero next week.

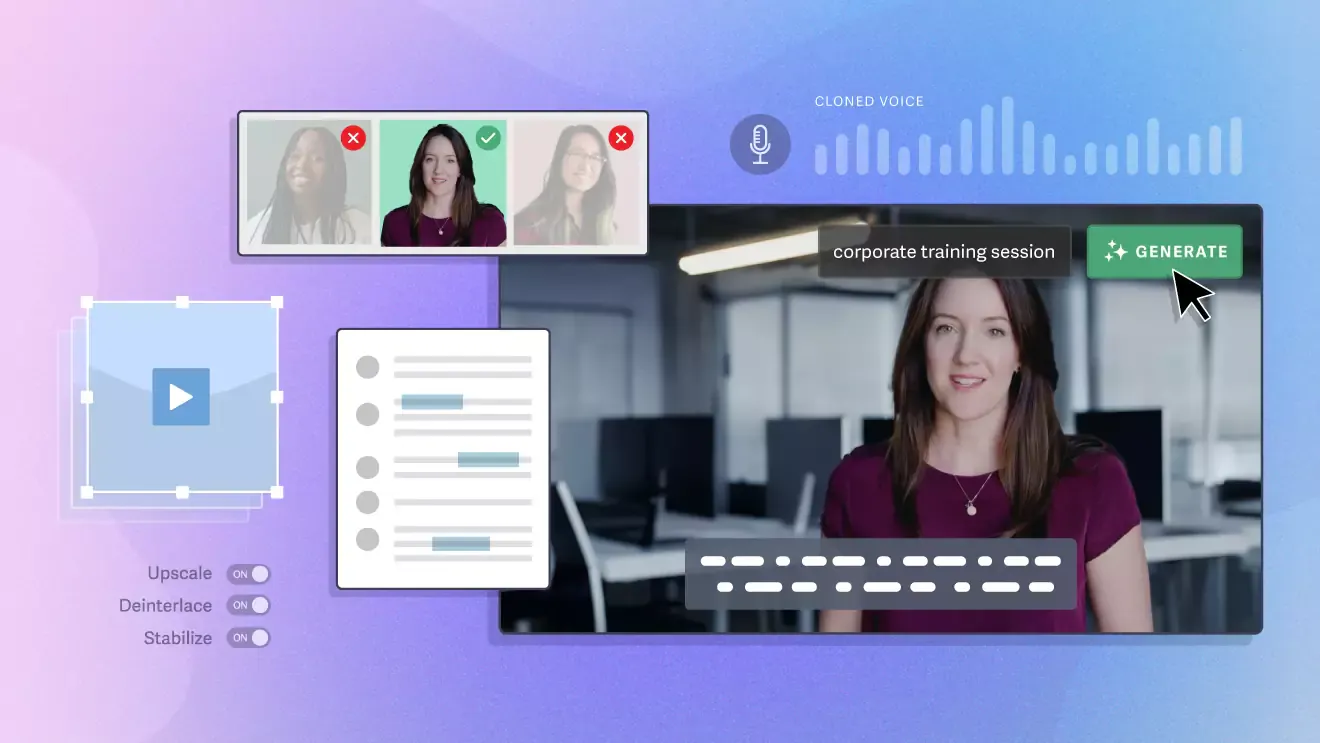

The current AI video wave is changing the game in a very specific way: we’re moving from single-shot gimmicks to repeatable storytelling workflows. Higher resolution, better motion, more consistent subjects, and multi-shot prompting are all becoming normal. Recent chatter around longer, more controllable 1080p generations and multi-shot tools is a good signal of where things are headed.

This post is a practical playbook you can copy to produce consistent short-form clips for a product—even if you’re a solo founder.

What you’ll get:

A simple “video assembly line” (not a fancy pipeline)

Prompt patterns that keep outputs stable

A QA checklist to avoid uncanny junk

Where “video extending” actually helps (and where it doesn’t)

Think of AI video the way you think about a build system

Instead of “generate one perfect video,” think:

Generate a clean base shot (subject stable, camera sane)

Extend it to get breathing room (timing + pacing)

Cut into a sequence (3–5 clips)

Add captions + publish

Why this works:

You reduce the “randomness surface area” (fewer moving parts per generation)

You get predictable iterations (“swap background,” “change angle,” “make it calmer”)

You can ship weekly without burning out
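To make the "predictable iterations" idea concrete, here is a minimal sketch that produces variants differing from a base shot in exactly one field. The `Shot` class, its field names, and the `variants` helper are all hypothetical names for illustration; they are not part of any video tool's API.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Shot:
    """One generation request. All field names here are illustrative."""
    subject: str
    background: str
    angle: str
    motion: str


def variants(base: Shot, **options: list[str]) -> list[Shot]:
    """Return shots that differ from `base` in exactly one field each."""
    out = []
    for field_name, values in options.items():
        for value in values:
            # Skip values identical to the base so every variant is a real change.
            if getattr(base, field_name) != value:
                out.append(replace(base, **{field_name: value}))
    return out


base = Shot(
    subject="matte-black water bottle",
    background="soft gradient studio",
    angle="eye level",
    motion="slow dolly-in",
)

# "Swap background" and "change angle" as separate, single-field changes.
batch = variants(
    base,
    background=["white seamless", "dark slate"],
    angle=["slightly above"],
)

for shot in batch:
    print(shot)
```

Changing one field per variant means that when a clip fails, you know which change caused it.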

Step 1: Start with a base model that’s strong at “clean motion”

If your goal is product-ish content (UI, packaging, physical items, characters holding objects), you want:

stable main subject

natural camera motion

crisp output so text/captions don’t look mushy

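One model in this category is Wan 2.2, which is positioned around higher-resolution generation and more controllable cinematic motion. Supported resolutions vary by release and host (the open-source release documents 480p and 720p output), so check before you plan around native 1080p.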

GoEnhance AI lets you use Wan 2.2 directly in the browser, so you can generate clips without installing anything.

Try the model here: Wan 2.2 free

Step 2: The “boring prompt” template that wins

Your first prompt should feel almost too plain. Boring prompts reduce chaos.

Base prompt template (copy/paste):

Subject: what it is + 2–3 defining traits

Environment: 1 sentence

Camera: 1 sentence

Motion: 1 sentence

Style: 1 sentence max

Example (generic product clip):

A matte-black water bottle with a silver cap, centered, clean edges. Minimal studio background with soft gradient. Slow dolly-in, 35mm look. Bottle rotates slightly, subtle light sweep across surface. Realistic, natural lighting, no logos, no text.
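If you keep prompts in a script or spreadsheet, the five-slot template could be assembled with something like the sketch below. `build_prompt` is a hypothetical helper, not a function from any generation tool; it only joins the slots in a fixed order so every prompt has the same shape.

```python
def build_prompt(subject: str, environment: str, camera: str, motion: str, style: str) -> str:
    """Join the five template slots into one prompt, in a fixed order."""
    parts = [subject, environment, camera, motion, style]
    cleaned = []
    for part in parts:
        # Normalize trailing periods so each slot ends with exactly one.
        text = part.strip().rstrip(".")
        if not text:
            raise ValueError("every slot needs a value; keep it plain, not empty")
        cleaned.append(text + ".")
    return " ".join(cleaned)


prompt = build_prompt(
    subject="A matte-black water bottle with a silver cap, centered, clean edges",
    environment="Minimal studio background with soft gradient",
    camera="Slow dolly-in, 35mm look",
    motion="Bottle rotates slightly, subtle light sweep across surface",
    style="Realistic, natural lighting, no logos, no text",
)
print(prompt)
```

With these inputs the function reproduces the example prompt above word for word.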

Rules that help a lot:

One subject per clip (every extra subject is one more thing that can drift or warp)

Avoid “cinematic masterpiece” fluff—use camera words instead (dolly-in, pan left, locked-off, handheld)

Keep motion small if you want consistency
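Two of these rules are easy to check mechanically before you spend a generation. The sketch below flags fluff words and a missing camera term. The word lists are my own assumptions, not an official vocabulary, and the check cannot tell how many subjects a prompt describes or how large the motion is; that part stays manual.

```python
import re

# Assumed word lists for illustration; tune them to your own prompts.
FLUFF = {"masterpiece", "stunning", "epic", "breathtaking", "award-winning"}
CAMERA_TERMS = ("dolly-in", "dolly-out", "pan left", "pan right", "locked-off", "handheld")


def lint_prompt(prompt: str) -> list[str]:
    """Return a list of warnings; an empty list means no rule was tripped."""
    text = prompt.lower()
    # Tokenize into words, keeping hyphenated words such as "award-winning" whole.
    words = set(re.findall(r"[a-z]+(?:-[a-z]+)*", text))
    warnings = []
    fluff_found = sorted(FLUFF & words)
    if fluff_found:
        warnings.append("fluff words: " + ", ".join(fluff_found))
    if not any(term in text for term in CAMERA_TERMS):
        warnings.append("no camera term found")
    return warnings


print(lint_prompt("A cinematic masterpiece of a bottle, stunning light"))
print(lint_prompt("A matte-black bottle. Slow dolly-in, 35mm look."))
```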

Step 3: “Extend” helps with pacing, not with fixing mistakes

A common mistake is treating extending as a repair tool. It isn’t. It’s a pacing tool.

When extending works:

Your base clip is already good (stable subject)

You need more time for captions / beats

You want smoother transitions for an edit

When extending fails:

The base clip has warped hands/faces

The camera is already chaotic

The subject is flickering between frames

This is where an extender fits nicely: AI extend video

Think of it like: “make this clip usable in a sequence,” not “make this clip perfect.”
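The extend-or-regenerate call can be written down as a small rule so you apply it the same way every week. This is a sketch under the assumption that a human watches the base clip and fills in the flags by hand; `ClipReview` and `extend_decision` are hypothetical names, and nothing here inspects the video itself.

```python
from dataclasses import dataclass


@dataclass
class ClipReview:
    """Flags filled in by a human after watching the base clip."""
    subject_stable: bool
    warped_hands_or_faces: bool
    camera_chaotic: bool
    subject_flickers: bool


def extend_decision(review: ClipReview) -> tuple[bool, list[str]]:
    """Return (ok_to_extend, reasons_to_regenerate_instead)."""
    reasons = []
    if not review.subject_stable:
        reasons.append("subject is not stable")
    if review.warped_hands_or_faces:
        reasons.append("warped hands or faces")
    if review.camera_chaotic:
        reasons.append("camera is chaotic")
    if review.subject_flickers:
        reasons.append("subject flickers between frames")
    # Extend only when no failure condition applies.
    return (not reasons, reasons)


good = ClipReview(subject_stable=True, warped_hands_or_faces=False,
                  camera_chaotic=False, subject_flickers=False)
bad = ClipReview(subject_stable=True, warped_hands_or_faces=True,
                 camera_chaotic=False, subject_flickers=True)

print(extend_decision(good))
print(extend_decision(bad))
```

Any single failure sends the clip back to regeneration, which matches the idea that extending should never be used to rescue a flawed base.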

A simple production table (so you don’t overthink it)

Here’s a lightweight plan you can run every week:

| Stage | Goal | What you keep | What you redo |
| --- | --- | --- | --- |
| Base shot (6–8s) | Stable subject + clean motion | 1–2 winners | Everything else |
| Extend (add 3–6s) | Breathing room for pacing | Extended winners | Any clip that drifts |
| Sequence (3–5 clips) | Tell 1 story | Best 3–5 clips | Extra shots |
| Captions + post | Ship | Final cut | Only if feedback says so |

The meta-point: don’t chase a single “perfect 20-second generation.” Build 3–5 small parts you can swap.
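For a rough sense of how much footage the plan yields, the arithmetic below combines the numbers from the plan: 6–8s base shots, 3–6s added by extending, and 3–5 clips per sequence. It assumes every clip in the sequence is extended and nothing is trimmed, so treat the result as raw material before editing, not as the length of the final cut.

```python
BASE_SECONDS = (6, 8)        # base shot length from the plan
EXTEND_SECONDS = (3, 6)      # seconds added by extending
CLIPS_PER_SEQUENCE = (3, 5)  # clips cut into one sequence


def runtime_range() -> tuple[int, int]:
    """Untrimmed sequence length in seconds, as (shortest, longest)."""
    shortest = (BASE_SECONDS[0] + EXTEND_SECONDS[0]) * CLIPS_PER_SEQUENCE[0]
    longest = (BASE_SECONDS[1] + EXTEND_SECONDS[1]) * CLIPS_PER_SEQUENCE[1]
    return shortest, longest


low, high = runtime_range()
print(f"untrimmed footage per sequence: {low}-{high}s")  # 27-70s
```

Each extended clip runs 9 to 14 seconds, so three to five of them give 27 to 70 seconds to cut from.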

Quality checklist

Before posting anything publicly, run this quick audit:

Consent & rights

If you’re using a real person’s face: do you have permission?

If it’s a brand/product: are you allowed to use that imagery?

Avoid making a video that implies endorsement by a real person/company.

Visual sanity

Hands: either avoid or keep them off-frame

Text: don’t rely on in-video text; use captions in editing

Logos: remove unless you own them

Story clarity

Does the clip communicate one idea in 2 seconds?

Would a stranger understand without sound?

Are you repeating the same angle too much?

This is the difference between “AI slop” and “shippable marketing asset.”
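If you would rather not run the audit from memory, the checklist fits in a small data structure. The sketch below is one possible encoding: the item wording is my paraphrase of the checklist, the answers still come from a human reviewer, and any item left unanswered counts as a failure.

```python
CHECKLIST = {
    "Consent & rights": [
        "permission for any real person's face",
        "allowed to use brand/product imagery",
        "no implied endorsement",
    ],
    "Visual sanity": [
        "hands avoided or off-frame",
        "no reliance on in-video text",
        "logos removed unless owned",
    ],
    "Story clarity": [
        "one idea in 2 seconds",
        "understandable without sound",
        "angles not repetitive",
    ],
}


def failed_checks(answers: dict[str, bool]) -> dict[str, list[str]]:
    """Group every item not explicitly answered True under its section."""
    failures = {}
    for section, items in CHECKLIST.items():
        missed = [item for item in items if not answers.get(item, False)]
        if missed:
            failures[section] = missed
    return failures


# Example review: everything passes except the silent-viewing test.
answers = {item: True for items in CHECKLIST.values() for item in items}
answers["understandable without sound"] = False

print(failed_checks(answers))
```

An empty result means the clip is clear to post; anything else tells you which section to revisit.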

The real lever: consistency beats novelty

AI video tools are getting better fast: longer clips, sharper 1080p outputs, and more multi-shot control across the broader ecosystem are becoming baseline expectations.

But for indie growth, the advantage isn’t “wow.” It’s repeatable output:

Same framing

Same pacing

Same style

Weekly cadence

If you can ship 3–5 clean clips every week, you win on distribution.

Closing: If you try this, what metrics would you watch?

If you run this workflow, I’d track:

time-to-publish (minutes)

output yield (how many usable clips per batch)

CTR on posts with video vs without

retention: saves, replays, replies
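Two of these metrics are simple ratios, and it helps to pin down the definitions before comparing weeks. The sketch below uses my own assumed definitions (yield as usable clips over generated clips, CTR as clicks over impressions, lift as relative change against the no-video baseline). The numbers in the example are made up for illustration, not results.

```python
def output_yield(usable_clips: int, generated_clips: int) -> float:
    """Share of generated clips that were good enough to use."""
    if generated_clips <= 0:
        raise ValueError("generated_clips must be positive")
    return usable_clips / generated_clips


def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction between 0 and 1."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return clicks / impressions


def ctr_lift(video_ctr: float, baseline_ctr: float) -> float:
    """Relative change of video posts against posts without video."""
    if baseline_ctr <= 0:
        raise ValueError("baseline_ctr must be positive")
    return (video_ctr - baseline_ctr) / baseline_ctr


# Illustrative numbers only.
batch_yield = output_yield(usable_clips=3, generated_clips=12)
with_video = ctr(clicks=30, impressions=1000)
without_video = ctr(clicks=20, impressions=1000)

print(f"yield: {batch_yield:.0%}")
print(f"CTR lift: {ctr_lift(with_video, without_video):.0%}")
```

Tracking yield per batch also tells you whether your prompts are getting more stable over time.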

If you want, paste your niche (SaaS, ecom, creator tool, etc.) and I’ll adapt the “boring prompt” template + shot list for your use case—still indie-friendly, still minimal hype.

This framing is really solid — especially treating AI video like a build system instead of a one-off artifact. The idea of reducing “randomness surface area” clicked for me.

One thing I’ve noticed is that consistency in constraints (same camera, same subject framing, minimal motion) matters more than model choice once you’re shipping weekly. It’s closer to software builds than creative experimentation.

Curious whether you’ve found a tipping point where the assembly-line approach starts outperforming manual editing in terms of audience retention, not just production speed.