I tried generating 1,000 SEO pages for a client... and broke everything. Here's how I fixed it.

Hey everyone,

I’ve been working on something for the past few weeks and wanted to share the messy reality of trying to scale programmatic SEO on WordPress.

A little while ago, a client asked a simple question: "Can we create landing pages for every single city we serve?"

Simple request. Until you do the math. 200 cities × 5 services = 1,000 pages.

I tried the usual approaches:
• Writers → way too slow and expensive.
• AI writers → content felt repetitive and robotic at that scale.
• WP Import tools → felt like a massive, inflexible workaround.

Everything I tried just felt wrong.

So I stopped thinking about "writing" pages, and started thinking about "generating" them as an infrastructure problem. What if pages weren't written, but generated dynamically from a spreadsheet?

I built a small internal tool inside WordPress to test this out. I uploaded a simple CSV with two columns: City | Service

Then I created one single template: "Best {Service} in {City}"

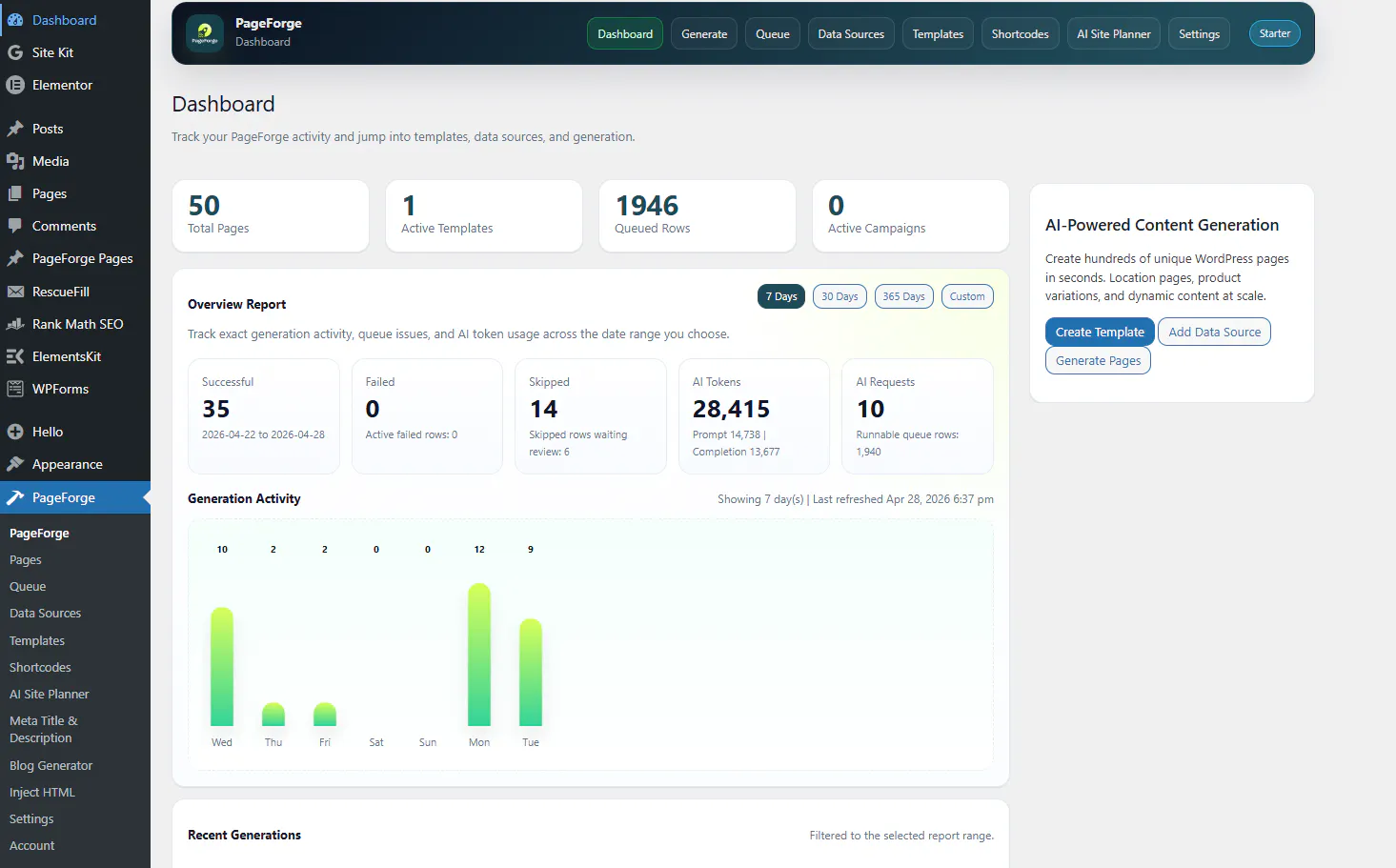
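For anyone who wants to picture the CSV-plus-template idea, here's a rough Python sketch. It is not PageForge's actual code, just an illustration; the function name `render_titles` and the sample cities and services are made up for the example.

```python
import csv
import io

# The single template from the post; {Service} and {City} match the CSV headers.
TEMPLATE = "Best {Service} in {City}"


def render_titles(csv_text: str, template: str = TEMPLATE) -> list[str]:
    """Fill the template once per CSV row and return the resulting page titles."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [template.format(**row) for row in reader]


# Hypothetical sample data (not from the real client spreadsheet).
sample = "City,Service\nAustin,Plumbing\nDenver,Roofing\n"
for title in render_titles(sample):
    print(title)
```

Each row produces one title, so the page count is simply the number of rows in the spreadsheet.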

I hit generate. First run:
• ~50 pages created
• ~2,000 rows queued up perfectly
• Zero manual writing

That’s when it clicked. This isn’t just content creation; this is SEO infrastructure. Marketplaces and directories have been doing this for years, but standard WordPress sites usually don't.

So, I decided to package my internal tool into an actual plugin called PageForge.

The part no one talks about (where things broke)

Generating 50 pages is easy. Scaling to thousands is where everything starts falling apart.

Issue #1: Duplicates were burning my API money

If a URL slug already existed, the system would still call the AI to generate content, fail to create the page, and eat my API credits anyway.

Fix in my latest push (v1.0.5): It now checks for duplicate slugs before making any AI call.
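The "check the slug before you pay for content" ordering can be sketched in Python like this. It's a minimal illustration under my own assumptions, not the plugin's real implementation: `slugify`, `generate_pages`, and the `generate_content` callback (standing in for the paid AI call) are all hypothetical names.

```python
import re


def slugify(title: str) -> str:
    """Lowercase the title and collapse runs of non-alphanumerics into hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def generate_pages(titles, existing_slugs, generate_content):
    """Call generate_content only for titles whose slug is not already taken."""
    seen = set(existing_slugs)
    created, skipped = {}, []
    for title in titles:
        slug = slugify(title)
        if slug in seen:
            skipped.append(slug)  # duplicate: skip before any AI call is made
            continue
        created[slug] = generate_content(title)  # the only place credits are spent
        seen.add(slug)
    return created, skipped
```

The point is purely the order of operations: the cheap lookup runs first, so a duplicate row never reaches the expensive call.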

Issue #2: Failed rows just disappeared into the void

This was painful. A generation row would fail, and it would just vanish. I had no idea what didn't publish.

Fix: Now, failed rows stay visible in the queue and explicitly show why they failed (empty fields, duplicates, etc.).
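One way to picture "failed rows stay visible with a reason" is to keep every row in the queue and record a status and error on it instead of dropping it. The Python below is only a sketch of that pattern; `QueueRow`, `make_slug`, and `process` are hypothetical names, and the two failure reasons are the ones mentioned above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class QueueRow:
    city: str
    service: str
    status: str = "pending"      # pending | published | failed
    error: Optional[str] = None  # human-readable failure reason, if any


def make_slug(row: QueueRow) -> str:
    """Build a simple slug such as 'best-plumbing-in-austin'."""
    return f"best-{row.service}-in-{row.city}".lower().replace(" ", "-")


def process(rows: list[QueueRow], existing_slugs: set[str]) -> list[QueueRow]:
    """Mark each row published or failed; never remove a row from the list."""
    for row in rows:
        if not row.city.strip() or not row.service.strip():
            row.status, row.error = "failed", "empty field"
        elif make_slug(row) in existing_slugs:
            row.status, row.error = "failed", "duplicate slug"
        else:
            existing_slugs.add(make_slug(row))
            row.status = "published"
    return rows
```

Because the function returns the same rows it was given, anything that did not publish can still be listed afterwards together with its `error`.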

Issue #3: Bulk generation was incredibly unstable

Some rows retried forever, causing server timeouts. Some just stopped randomly.

Fix: I had to rebuild a proper queue and retry system that actually behaves predictably on standard WP hosting.
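The core of a predictable retry system is a hard cap on attempts, so no row can loop forever. Here's a minimal Python sketch of that idea. It is an assumption about the general pattern, not the plugin's actual queue; `run_queue`, `handler`, and the limit of 3 attempts are illustrative choices.

```python
from collections import deque

MAX_ATTEMPTS = 3  # illustrative cap; the real plugin's limit is not stated in the post


def run_queue(jobs, handler, max_attempts=MAX_ATTEMPTS):
    """Process jobs in order, retrying failures up to max_attempts times each."""
    queue = deque((job, 1) for job in jobs)
    done, failed = [], []
    while queue:
        job, attempt = queue.popleft()
        try:
            handler(job)
            done.append(job)
        except Exception as exc:
            if attempt < max_attempts:
                queue.append((job, attempt + 1))  # retry later, behind other jobs
            else:
                failed.append((job, str(exc)))    # give up and keep the reason
    return done, failed
```

A retried job goes to the back of the queue, so one bad row cannot block the rest, and the loop always terminates because every job is attempted at most `max_attempts` times.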

Current state of the project:
• 50 pages live on the test run.
• ~1,946 queued.
• Running fully inside WordPress.
• Added an optional AI content field ({seo_content}) in case users want to pad the pages to avoid thin content.

I'm trying to push this to thousands of pages safely, but I'm still figuring a few things out.

It’s completely free right now on the WordPress plugin directory.

Download Free: https://wordpress.org/plugins/pageforge/

Get Pro: https://pageforge.pro/

What I’m unsure about (would love your input):

How do you guys avoid "thin content" or doorway page penalties at scale?

Would you actually trust AI-generated sections running in production across 1,000 pages?

How do you even QA 1,000+ pages without losing your sanity?

I'm not just trying to push a plugin here; I'm genuinely trying to understand whether this direction (treating SEO as systems rather than writing) makes sense to other builders.

If you’ve built anything similar (using Airtable, WP All Import, custom Python scripts), I’d love to hear what broke for you when you tried to scale it.

If there’s interest, I'm happy to share the exact CSV templates and workflow I'm using to generate these.
