What I learned after launching our $3.99 Hermes hosting

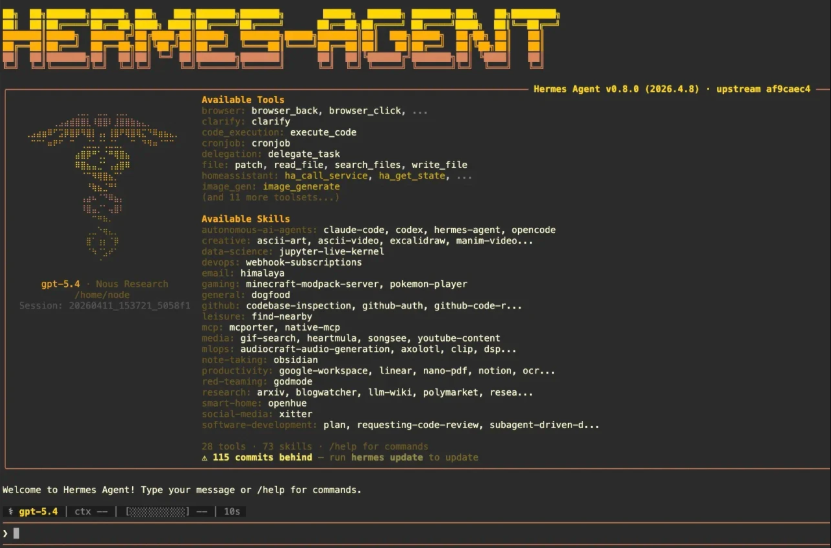

We launched Agent37 Hermes Hosting a few days ago.

Honestly, I didn’t expect much this early. I mostly wanted to find out whether anyone actually cared enough to use it.

But a few things stood out:

- A few people mentioned they’d share it with friends

- Some early conversations pointed to real workflow use, not just testing

- A couple said they could have self-hosted, but didn’t want the ongoing hassle

And one line stuck with me:

“You’re solving a problem I wouldn’t take the time to solve myself.”

What I got wrong

I thought the main value was making setup easier.

But that’s not the real issue. Setting things up once is fine.

Maintaining them, keeping them running, and coming back without losing context: that’s where friction builds up.

What changed after launch

That shifted how I approached things.

Instead of focusing on setup, I focused more on making it easier to return and continue.

Early feedback pushed us to simplify things fast.

That led to:

- Adding a simple chat UI

- Simplifying how people get started

How I think about usage now

Not heavy workflows

Not production systems

More like:

- Quick coding sessions across the day

- Small automation tasks running in the background

- Testing ideas without committing to full setups

Simple, consistent usage

Biggest takeaway so far

It’s not about:

“can this solve tasks?”

It’s about:

“will I keep using this tomorrow?”

Still early. Still figuring things out.

Curious from others building or using tools like this:

What usually makes you stop using something, even if it works well?

If you’ve tried running agents in your workflow, I’d love to hear what worked and what didn’t.

The maintenance point is very relatable. Most people can figure out setup once, but ongoing friction is what slowly kills usage. Curious how you’re planning to improve the “come back later” experience from here?

This makes a lot of sense. Most users don’t actually want full control over infrastructure; they just want something reliable enough to keep using without thinking about it constantly.

Honestly refreshing to read a founder talking openly about what they misunderstood before launch. Those are usually the most valuable lessons.

I think a lot of AI tools fail because they ask users to completely change their workflow. Your lightweight usage angle feels much easier to adopt naturally.

Really liked the honesty in this post. A lot of founders talk about growth, but not enough talk about the assumptions that changed after launch. Did any user feedback completely surprise you?

Honestly yes. I originally thought setup simplicity was the main value, but most conversations kept coming back to maintenance and continuity instead. People were less worried about getting started once and more concerned about whether they would realistically keep using it without friction later.

Really appreciate posts like this because they focus more on product learning than vanity metrics. What's been the hardest part so far after launch?

The insight about "returning without losing context" is exactly right. That's the real friction nobody talks about. What makes me stop using something that works: when the mental overhead of remembering how to use it exceeds the value it saves me.

This feels like a much more practical approach to AI workflows than trying to force enterprise-level use cases immediately. Are lightweight daily tasks where you see the strongest retention right now?

Yeah, surprisingly those smaller repeat workflows seem much stickier than larger complex setups right now. People seem to value quick reliability and convenience more than trying to build huge automated systems immediately.

The “will I keep using this tomorrow?” takeaway hits hard. A lot of tools work technically, but don't become habits. That difference matters a lot.

I am starting to think retention is more important than feature count early on. If people naturally want to come back and continue using something, that’s usually a much stronger signal than temporary excitement.

Interesting shift in thinking, honestly. Optimizing for repeat usage instead of setup speed feels like the smarter long-term play. Have you noticed users naturally returning without reminders yet?

A few already have, which honestly became a stronger signal for me than launch traffic itself. Seeing people naturally return without being pushed tells me a lot more about actual product value.