My Attempts to Automate Content Writing

One problem I keep running into when working on SEO and content marketing is that I have far more content ideas than I have time to write about.

Here is what I have tried so far to scale the process. I hope fellow IndieHackers find this helpful for their own content workflows.

The original write-up and my other content are here.

I would also be happy to chat if anyone wants to bounce ideas around on this topic.

Outsourcing

The first thought was to hire freelance writers. In this case, I could focus on finding topics and outlining them and have others flesh these out into complete articles. This is a common practice among content marketers.

I hired three writers from different countries on Upwork to write about different topics. The cost came down to about $10 for a 700-word article. The turnaround was fast too. But the quality wasn't immediately publishable. I would still have to spend a lot of time editing the drafts myself, on top of the overhead of working with the writers.

At this point, the hacker in me said, "that's it, I'm turning my attention to automation."

Generating Content with GPT-2

The first content automation approach was to feed a prompt to a generative language model. OpenAI's GPT-3 has been making waves with its huge model and cool demos, so it was a good place to start.

At the time of writing, I didn't have access to GPT-3 (by the way, if anyone can hook me up that'd be great). Instead, I used Hugging Face's implementation of GPT-2, which works in a similar way but has far fewer parameters.

I would give it an article prompt, say the title or first sentence I had in mind, and GPT-2 would spit out a plausible-sounding paragraph. The output read like human writing, which was good. But it was too free-form and off-topic for my use case. Playing with the model parameters that I could tweak didn't help with this either.
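A minimal sketch of what this kind of prompt-to-paragraph setup can look like with the Hugging Face `transformers` text-generation pipeline. The helper name `generate_paragraphs` and every parameter value below are illustrative assumptions, not the settings actually used in these experiments.

```python
# Sampling knobs that are commonly tweaked for GPT-2.
# The values are illustrative assumptions, not tuned settings.
SAMPLING_KWARGS = {
    "max_new_tokens": 120,      # length of the generated continuation
    "do_sample": True,          # sample instead of greedy decoding
    "temperature": 0.8,         # lower values give more conservative text
    "top_k": 50,                # sample only from the 50 most likely tokens
    "top_p": 0.95,              # nucleus sampling cutoff
    "num_return_sequences": 3,  # several candidates per prompt
}


def generate_paragraphs(prompt, **overrides):
    """Return candidate continuations of `prompt` from the public `gpt2` checkpoint."""
    # Imported lazily: this needs `pip install transformers torch`
    # and downloads the model weights on first use.
    from transformers import pipeline

    generator = pipeline("text-generation", model="gpt2")
    kwargs = {**SAMPLING_KWARGS, **overrides}
    outputs = generator(prompt, **kwargs)
    return [out["generated_text"] for out in outputs]
```

Something like `generate_paragraphs("The belt system in BJJ", temperature=0.7)` would return three candidate paragraphs. Note that these knobs shape how random the text is, not what it is about.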

I needed more control over the output topics.

Trying Production Services

Then I thought, maybe there are more production-ready services I could use. I came across Contentyze here on an IH podcast episode and was excited to try it out.

It promised a lot on the landing page, and it had an interface that was easy to use. But the outputs just weren't usable for the topics that I was writing about. It seemed like a wrapper on top of the language models I had already tried.

Paraphrasing a Paragraph

I thought of a different approach. What if I took existing content, automatically paraphrased it, and added my unique angle at the end?

I could take the content that ranked on the first page of Google for my topic and repurpose it as a starting point for my draft. And to simplify the problem, I could start with paraphrasing one paragraph (or sentence) at a time.

And if I could crack this, it would be immediately useful for content writers and college students alike.

This direction seemed promising!

Syntax-guided Controlled Generation of Paraphrases (SCGP)

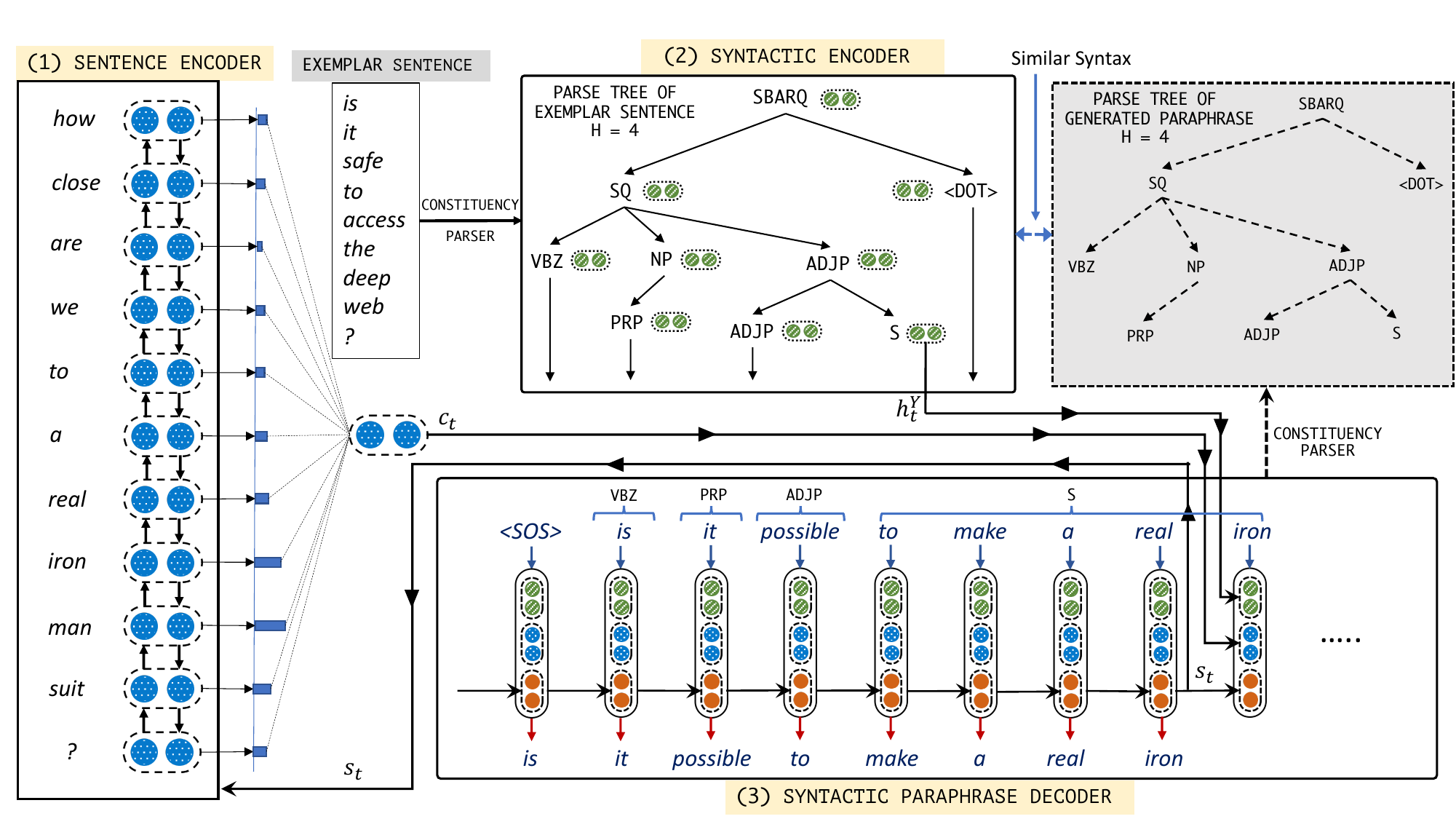

I skimmed the latest natural language processing (NLP) research for the task of automatic paraphrasing. The first paper that caught my attention was the Syntax-guided Controlled Generation of Paraphrases (SCGP) paper (2020) by researchers at the Indian Institute of Science, Microsoft Research, and Google.

It worked like this: given an input sentence (the sentence to be paraphrased) and an exemplar sentence, the model would "generate syntax conforming sentences [conforming to the exemplar] while not compromising on relevance". They even included the code and a pre-trained model.

Big promise from a strong team. Let's give it a try!

And...the output was disappointing. I took the pre-trained model and ran it on the test data they provided (that is, not even using my own content). The results were far from even correct sentences. Here's one example:

Input Sentence: why do some people like cats more than dogs ?

Exemplar Sentence: why do some people develop food allergies later in life ?

Output Paraphrase: why do some people prefer dog puppies more than dogs in ?

Fancy approach on paper, but this wouldn't fit my use case.

Word Embedding Attention Network (WEAN)

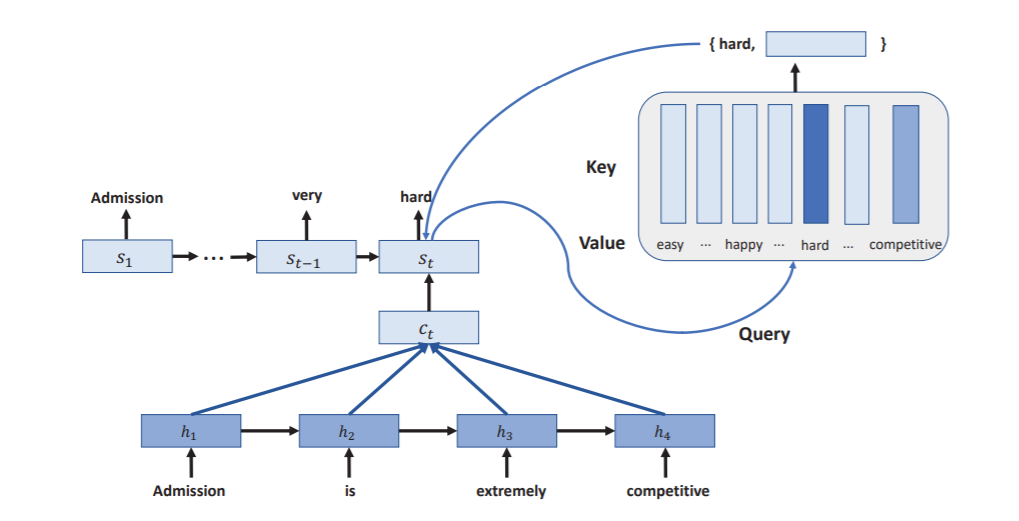

What about a different approach for automatic paraphrasing? I found a 2018 paper titled "Word Embedding Attention Network" that also provided code and pre-trained models.

The model would use word embeddings (vector representations of words) to capture the meanings of the words and generate the output words by decoding those embeddings. That sounded great in theory.

In practice though, the outputs still weren't good enough. Using their pre-trained model, the outputs resembled correct sentences, but only for very simple phrases. For more meaningful sentences, often the model would not paraphrase at all. That is, the output would look exactly the same as the input. That was a deal-breaker for this use case since I couldn't just plagiarize other people's content.
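A small sketch of how the "output looks exactly like the input" failure could be caught automatically, using only Python's standard library. The function names and the 0.9 threshold are assumptions for illustration and are not part of the WEAN code.

```python
from difflib import SequenceMatcher


def _tokens(sentence):
    # Lowercase and split on whitespace so casing and spacing differences are ignored.
    return sentence.lower().split()


def copy_ratio(source, paraphrase):
    """Similarity in [0, 1] between the two token sequences; 1.0 means identical."""
    return SequenceMatcher(None, _tokens(source), _tokens(paraphrase)).ratio()


def is_near_copy(source, paraphrase, threshold=0.9):
    """Flag outputs that are the same, or almost the same, as the input."""
    return copy_ratio(source, paraphrase) >= threshold
```

Any paraphrase for which `is_near_copy` returns `True` would be discarded before it reaches a draft. The threshold would need tuning on real outputs.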

Conclusion

This was my first foray into delegating my writing. Many approaches I took sounded great in theory, but the outputs just weren't good enough at the time of writing. Directionally, though, I still think this is promising as the automated techniques get more sophisticated.

To this end, there are a few more ideas I can try:

- Run the paraphrase model outputs through a grammar-checking API. At least then I would eliminate the grammatical errors.

- Try GPT-3 when I get access

- Train models some more with my custom data, or tweak the model architecture to apply more constraints to the text generation

Thanks again for checking this out. Hope you found this helpful. I write more about automation, product, and other learnings on my site: https://knowledgeartist.org/

one question that i might ask is...

answer: the goal is to transact.

how does this happen? one answer that i believe doesn't get enough attention is trust.

trust is created over a long period of time. sometimes. sometimes, it can happen really quickly. but, like most things, it takes time.

i think automating posts can hinder the development of trust. i'm not saying that it won't / can't work... i'm just thinking aloud about this process and what's really important / at stake.

fascinating. thanks for sharing your process.

thanks for sharing your thoughts, John

I totally agree with the trust part.

the writing that I was trying to automate was more the "boilerplate" SEO pieces, e.g. "benefits of green tea", "the belt system in BJJ"

gotcha. makes sense!

While I like your mindset, writing is just one of those things you can't automate. Honestly, if you aren't able to invest more money into it, then the time you put into all that would probably have been better spent working on the content.

That said, there are some things you can do, which might help you create content more efficiently.

Break content creation into smaller tasks that are easier to schedule and work on. For instance, you could set aside a block of time for planning out a calendar with topics and keywords, another block of time for creating an outline, another for research, another for writing a draft, one for editing, one for layout, etc.

With smaller tasks, you should be able to focus better. Try to eliminate distractions and find a routine that works for you. The Pomodoro method can be a good one to try.

Outsource the parts that are most difficult or monotonous for you. That $10 you mentioned is not going to get you a full useable article, but you could put it to having someone find and format images for your posts, for instance.

Finally, I know budgets are tight for IndieHackers, but if you're going to commit to doing content marketing, it may be worth prioritizing some more funds to go toward it. Think about it in terms of cost vs. return. If you're doing it yourself, is the return on your time more than anything else you could be working on? If not, then it may make more sense to invest in quality outside help.

Thanks for sharing these experiments! I had the same thought after listening to the Contentyze podcast. I tried GPT-2 as well, and it really wasn’t even close. I tried feeding the output into Grammarly, but, even though the sentences were nonsensical, Grammarly gave it a perfect score. Probably because they’re running GPT-2 as their checker. Glad to know that even the more robust solutions show similar results, short of being able to access GPT-3, of course.

thanks Chris! and thanks for sharing your experience with Grammarly, was going to play with grammar APIs next

Thank you for sharing this, I really enjoyed reading the technical details, as I also tried a few of these approaches.

I have a friend working as an engineer for a large media company, and I asked him about their approach to this. Basically, they don't use these sophisticated AI models for writing more than a few sentences. The automation approach they use is based on templates, questions, and data extraction from external sources. After the editor answers a few questions, the content is generated, and the editor only needs to fix a few details before it is ready. A common example of this is financial articles, like company earnings reports or jobless claims, where the data only needs to be extracted from official sources and the content is auto-generated.
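A rough sketch of the template-plus-data idea, assuming a single hypothetical earnings template. The field names, the wording, and the sample company are all made up for illustration; a real newsroom setup would have many templates and pull the numbers from an official source.

```python
# Hypothetical template: the wording and field names are assumptions.
EARNINGS_TEMPLATE = (
    "{company} (${ticker}) reported {quarter} earnings of ${eps:.2f} per share, "
    "{verdict} the consensus estimate of ${estimate:.2f}. {editor_note}"
)


def verdict(eps, estimate):
    """Pick the verb from the data instead of asking a model to write it."""
    if eps > estimate:
        return "beating"
    if eps < estimate:
        return "missing"
    return "matching"


def render_earnings(data, editor_note=""):
    """Fill the template from structured data plus an optional editor answer."""
    text = EARNINGS_TEMPLATE.format(
        verdict=verdict(data["eps"], data["estimate"]),
        editor_note=editor_note,
        **data,
    )
    return text.strip()


# Made-up sample record standing in for extracted data.
sample = {"company": "Example Corp", "ticker": "EXMP", "quarter": "Q3",
          "eps": 1.25, "estimate": 1.10}
print(render_earnings(sample, editor_note="Management raised full-year guidance."))
```

The `editor_note` argument stands in for the "editor answers a few questions" step: free text goes into fixed slots, and everything else comes from the data.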

thanks, Mariano! yeah synthesizing content from underlying structured data seems like a much more robust approach. thanks for sharing

Hi Mariano,

Yes, this is the same approach I'm using in Stocks2.com. All articles and stock reports are created from structured data and complex templates.

Have a look at: https://stocks2.com/news/ or

The articles are 100% automated with no further review. Sometimes there are mistakes, which I solve by improving the template logic.

Happy to share more in private.

seems like another bot is going to be de-structuring your articles! wouldn't the raw data be much better presented in some kind of visual format?

for example:

Yesterday, Lennox International ($LII) hosted the quarterly financial event and presented the FQ3 report. LII surpassed Wall St estimates and presented earnings a share of $3.53, that is a 13.87% surprise versus the previous forecasts of $3.10. In addition, reported sales were $1.1 billion versus estimates of $981.9 million.

your template could be:

symbol: LII

when: yesterday

event: Q3 report

forecast: $3.10

result: $3.53

diff: +13.87% | surpassed

sales: expected $981.9M | actual $1100M | +12%

etc. and present more visually with colors. almost like a stock chart :D
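A quick sketch of how that briefing could be produced from the raw numbers, with the percentage differences computed rather than typed in. The function and parameter names are assumptions; the figures are the ones from the Lennox example above, with sales in millions of dollars.

```python
def pct_diff(actual, expected):
    """Percentage by which `actual` differs from `expected`."""
    return (actual - expected) / expected * 100


def briefing(symbol, when, event, eps_forecast, eps_actual,
             sales_forecast_m, sales_actual_m):
    """Render the key/value briefing; sales figures are in millions of dollars."""
    eps_diff = pct_diff(eps_actual, eps_forecast)
    sales_diff = pct_diff(sales_actual_m, sales_forecast_m)
    outcome = "surpassed" if eps_diff > 0 else "missed" if eps_diff < 0 else "met"
    return "\n".join([
        f"symbol: {symbol}",
        f"when: {when}",
        f"event: {event}",
        f"forecast: ${eps_forecast:.2f}",
        f"result: ${eps_actual:.2f}",
        f"diff: {eps_diff:+.2f}% | {outcome}",
        f"sales: expected ${sales_forecast_m:.1f}M | actual ${sales_actual_m:.1f}M"
        f" | {sales_diff:+.0f}%",
    ])


print(briefing("LII", "yesterday", "Q3 report", 3.10, 3.53, 981.9, 1100.0))
```

Because the same record could feed both this view and a prose template, the two formats would not have to be maintained separately.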

presumably you're combining quant data from symbols with parsed written press releases?

seems like it would be quicker for a human to parse?

Or maybe some people just prefer reading prose with their cereal?

Hi David, thanks for your comment.

All content is generated from raw data and templates, nothing from press releases. The idea is to write journalistic articles, but your comment is fair, the articles may include a briefing like the one you suggest.

That's a massive scheme. I just use a couple of helpful apps and that's it!

Very helpful, thanks for sharing

very interesting post! college students are gonna love you if you crack this :D

This reminds me of the whole Demand Media content farm strategy, but that content was mostly human-generated. You're going to cause another algorithm update to crush SEO everywhere.

Did you try translation tools? Just converting a sentence into Spanish and back would probably pick the most common translation. I'm not sure what Google's best language pairs are, but depending on sentence complexity you could use a better/worse pair.
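A rough sketch of the round-trip idea. The `translate(text, src, dest)` callable is a hypothetical signature that the caller supplies to wrap whatever translation service is available; no particular API is assumed here, and the pivot languages are arbitrary examples.

```python
def back_translate(sentence, translate, pivot="es", source="en"):
    """Paraphrase by translating into a pivot language and back again.

    `translate(text, src, dest)` is a caller-supplied function
    (hypothetical signature) wrapping a real translation service.
    """
    pivoted = translate(sentence, source, pivot)
    return translate(pivoted, pivot, source)


def paraphrase_candidates(sentence, translate, pivots=("es", "de", "ja")):
    """Try several pivot languages and keep the distinct, changed results."""
    candidates = []
    for pivot in pivots:
        result = back_translate(sentence, translate, pivot=pivot)
        # Drop round trips that came back unchanged or duplicate an earlier one.
        if result != sentence and result not in candidates:
            candidates.append(result)
    return candidates
```

Trying several pivots is one way to explore the "better/worse pair" point: pairs that translate less faithfully tend to change the wording more, at the cost of more errors.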

Also, what about simple word replacement with synonyms? There must be tools for generating 'graded readers' for English learners, e.g. to replace more complex, nuanced words with simpler ones. This would have the added benefit of making your content even more accessible than the original.
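A toy sketch of dictionary-based word replacement. The `SIMPLER` map is a tiny hand-written assumption for illustration; a real tool would need a proper thesaurus or graded word list, and this version ignores inflected forms such as "utilizes".

```python
import re

# Tiny hand-written map from harder words to simpler ones (illustrative only).
SIMPLER = {
    "utilize": "use",
    "commence": "start",
    "purchase": "buy",
    "numerous": "many",
}

_WORD = re.compile(r"[A-Za-z]+")


def simplify(text, mapping=SIMPLER):
    """Replace whole words found in `mapping`, keeping a leading capital letter."""
    def swap(match):
        word = match.group(0)
        replacement = mapping.get(word.lower())
        if replacement is None:
            return word  # not in the map: leave the word untouched
        return replacement.capitalize() if word[0].isupper() else replacement

    return _WORD.sub(swap, text)


print(simplify("Numerous people utilize it."))
```

Word-for-word swaps leave the sentence structure intact, so on their own they would change the text less than a true paraphrase.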

Another way would be to possibly combine multiple sources? If you can find three paragraphs on the same topic, and then slice and dice sentences from each?

Thanks for sharing. I think many of us were inspired by the episode on Contentyze. I also took a crack at this, although not in as much depth as you, because I'm not very familiar with ML or automation. My experience with Contentyze was about the same: it is a solid concept, but I don't know how well it will work for developers who blog. One option I tried and liked was the AI writer on sassbook.com.

https://sassbook.com/learn-more-ai-writer

It works by generating 'snippets' of text and has a sort of rating system for the results you get back. The idea is to write in blocks while building up the article. It's more of a tool for inspiration, but I did use some of the text it generated in my new blog post.

https://medium.com/swlh/adding-firebase-authentication-in-gatsby-with-a-little-typescript-magic-adf6ad1fbfb2

I don't think technical articles, like the coding tutorials that developers are likely to write, will work very well with the technology as it exists. The best it can do for me right now is help with the intros and conclusions of my articles. Thanks for taking the time to do all this research and post it. Good luck!

This is super interesting. As someone who runs a company whose only product is blog writing, this is something I've had my eyes on for a while.

I think for now, if you're looking to create articles that show thought leadership, it's a case of having people write them. Obviously, the quality of writers varies when you use freelancer sites and smaller budgets.

If you just need content in vast volumes, without too much focus on thought leadership or strategy, then automation is likely the most cost effective option.

I'd be really interested to see my opinion proven wrong though!!

Very insightful, thanks for sharing your findings.